posted: May 11, 2019

tl;dr: The full stack concept has been around for decades, but the definition has changed considerably...

Although I don’t recall the term being used at the time, the concept of being a full stack developer has been around since I was in college in the 1980s. Back then it meant being able to design the hardware of a computer or computer-based system and also being able to write all the software that ran on that hardware. A desire to learn as much as possible about the entire stack is one of the key reasons I chose the major and courses that I did.

Early in my career I worked on several full stack projects, by that definition. One of them was the Inmar echoBOX, where I got to design the hardware and then write all the embedded software in assembly language. I enjoyed being able to work on both the hardware and software, but even then it was an exaggeration to say I was working at all layers of the stack. I hadn’t designed the integrated circuits, in particular the 8-bit microprocessor. Some other team of developers at Motorola was responsible for that key layer of the overall stack.

I got an inkling of what was happening to the hardware design profession back then: software was creeping into hardware design, to handle the increased complexity and component density driven by Moore’s Law. Hardware circuit design was moving from using pencils, paper, and templates to draw schematics of interconnected individual components, to using computer-aided design tools. As an intern at Teradyne, I was one of the first guinea pigs to test out the first schematic capture software system the company had purchased. My project was non-critical, so they didn’t care if it failed due to tool issues, but it succeeded, which opened the eyes of the more experienced designers. It was time to put down their pencils.

In my second stint at Teradyne I wrote code for a pure software-based logic simulation tool called Teradyne LASAR, which simulated the timing of large custom digital circuits to see if they would work before being built. The number of transistors on integrated circuits was growing exponentially thanks to Moore’s Law, so chip design shifted to designing hardware circuits in software, through the use of hardware description languages such as Verilog and VHDL. Even printed circuit board design shifted into the software realm with automated layout tools and auto-routers. Over time, in the hardware realm, it became impossible to design all the layers for anything but the simplest designs and products.

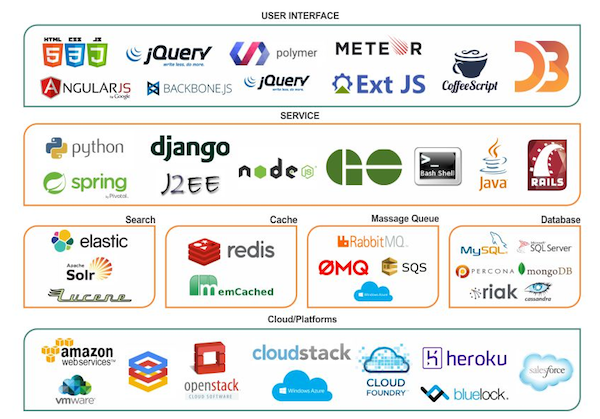

Even this full stack diagram glosses over some of the layers of the overall stack

On the software side, complexity also grew dramatically over the years. When I started my career it was possible to write your own operating system for an embedded system entirely from scratch. Even off-the-shelf real-time operating systems like VxWorks were pretty simple to use, initially. There wasn’t much to MS-DOS, and early Windows application programming was straightforward, using the Microsoft C/C++ compiler and some operating system calls for managing the user interface and storing data on disk.

Then came networking and the Internet, which grew into a huge new layer of its own if you really wanted to understand the routing protocols. Operating systems bulked up, to add networking functionality. Different application frameworks appeared. The Web came along, and then web applications, with Hotmail as one of the first. This created two new specialties: developing the client side of web applications in the browser, and developing the server-side functionality back in the cloud.

Today this is what the term “full stack” usually means. But both the back end and the front end continue to grow in complexity. To master the back end you need to know databases, cloud services, microservices, authentication, security, privacy, and a variety of deployment methodologies: bare metal servers, virtual machines, containers, and now serverless. The front end now has a plethora of devices (mobile and desktop, to cite just the two primary ones) and many frameworks, and must also deal with responsiveness, accessibility, and analytics. Today JavaScript is the primary front end programming language, but WebAssembly is now allowing other languages to be used. There’s even an argument being made that the front end by itself is too large for one person to master, and that there is a great divide between the design aspects of the front end and the programming aspects.

It’s impossible to master it all: there are just too many layers of the stack. There is a bootcamp school called the FullStack Academy, but the syllabus that they teach is a simplification of reality. They use JavaScript on both the front end and the back end, which is certainly a very valid way to build web apps, but it glosses over the reality of the many different languages in use on the server side: Python, PHP, Ruby, Go, C#, Java, SQL, and others. And they don’t bother covering the other lower levels of the stack, such as the networking layer, the operating system layer, or (shudder) the hardware layer. That would take far too long.

Being a full stack developer has always been more of an ideal than a reality. That’s to be celebrated, actually: it is a sign of success of the industry. The body of knowledge has grown far beyond the capabilities of any one person to master it all.