posted: June 22, 2019

tl;dr: The first and last step in any data programming task is to look at the data...

Before starting on any programming task involving data, I always spend a considerable amount of time looking at the data. I won’t write a line of code until I’ve thoroughly analyzed, by visual inspection, the data that I am dealing with. Most ETL (Extract, Transform, and Load) data jobs, outside the realm of data science, tend not to require sophisticated programming techniques. Rather, the main qualities required of the programmer are fastidiousness, attention to detail, and tenacity. A data job isn’t truly complete until all the corner cases are properly handled.

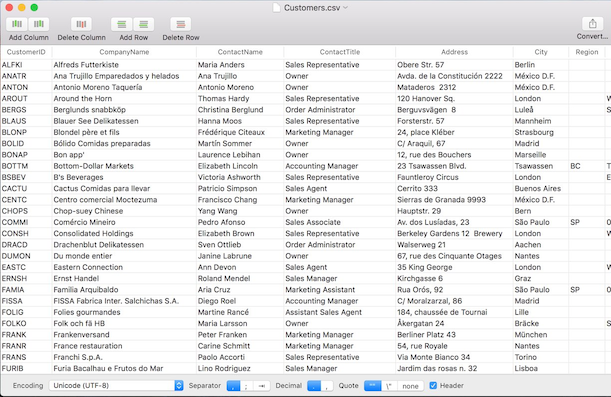

If the data is coming from a database, I’ll connect to the database and look at the tables, columns, and rows by writing some queries. If it is from a spreadsheet, I’ll fire up the spreadsheet program, or if it is from a CSV file I’ll view it with a CSV file reader. If the data is coming directly from user input, such as from a webform or a user interface, I’ll look at some prior user entries, if they exist.

Over the years I’ve learned to look for many potential pitfalls in the data. Some of the questions I attempt to answer are:

Table Tool is a decent CSV file viewer for MacOS

This assessment tells me what problems exist in the data. Some of these may need to be corrected at the source, and some may be able to be cleaned up in code.

Then, still before I write a line of code, I’ll run some records through the desired “transform” algorithm in my head to see if the results look good. Sometimes the transform algorithm doesn’t work when applied to the real-world data and will need to be modified. If you jump too quickly into coding an algorithm that doesn’t actually work with the given data, you’ll just end up with a large number of bad results and wasted coding effort.

Finally, after determining that the algorithm might actually work, and figuring out how I’ll need to clean up the data, I’ll write the code. This is the fast, easy, and fun part. When I write the code, I’ll put in a bunch of counters to keep track of how many records fall into certain categories, as well as some print/log statements for certain rare cases to see if they actually occur. These counters and log statements are one way to know that the code is actually working as expected. They can also be used for monitoring the job during future runs.

I’ll start by running a small number of records through my code and closely inspecting the results. Only when I have confidence that a small set of resulting records look good will I increase the sample size. I’ll typically bump it up in steps of an order of magnitude or two before I launch a job that processes all the records.

One other test that I like to run, especially when dealing with data jobs that take input from users, is what I call the emoji test 😀. Unicode encoding issues may exist somewhere across the multiple systems in the data path. To surface these I’ll put in some records with emoji characters in the text fields and see if they make it all the way through.

This methodology has worked for me. The most important step, both at the beginning and end of writing any data job, is to look closely at the data.

Related post: Play with the data

Related post: Count the data

Related post: De-duplicating database records